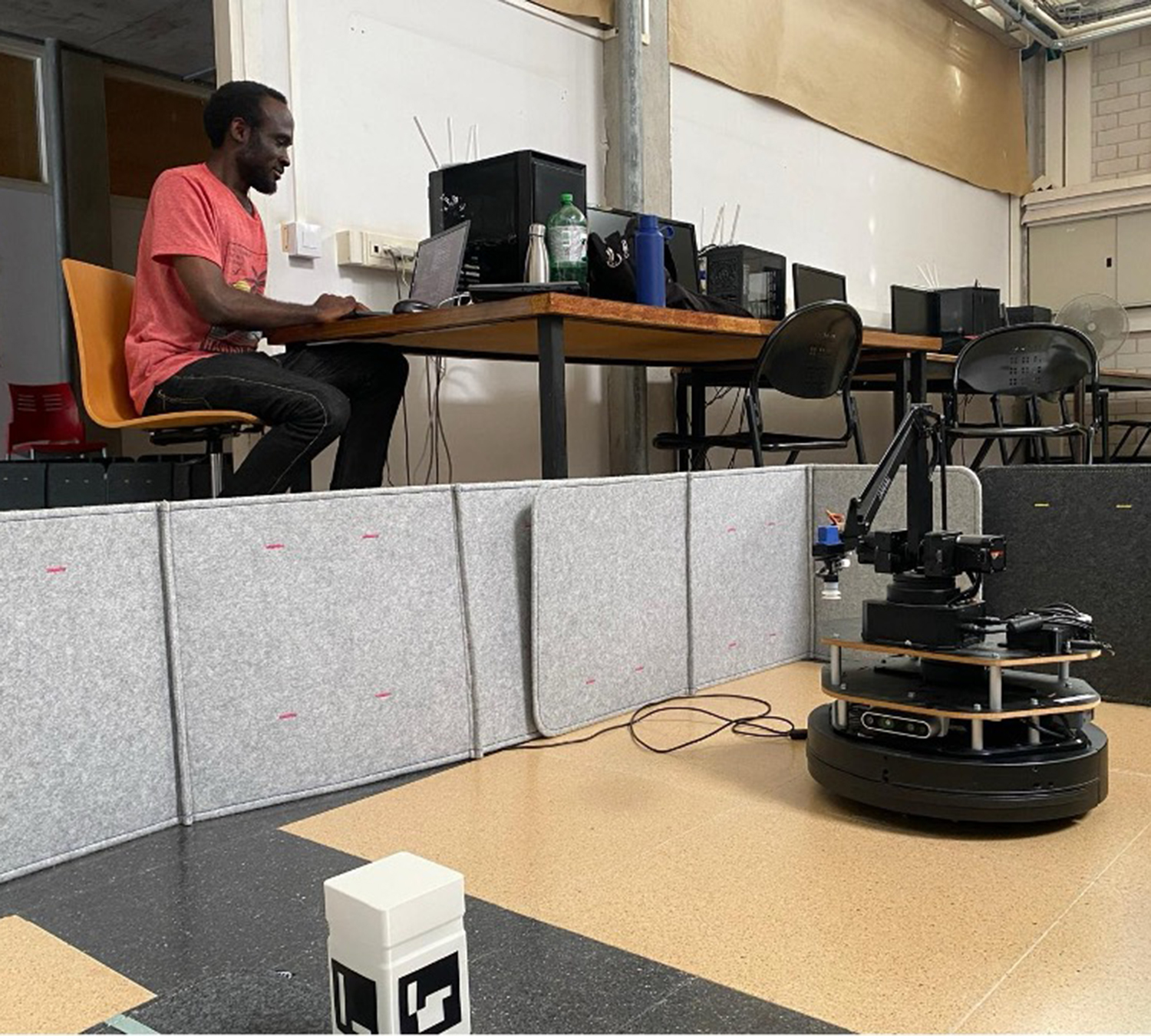

Muhammad Faran Akram and Nwafor Solomon Chibuzo, students of the IFRoS 2024-2026 cohort, present their final integration project from the first year of the master's program. They developed and integrated modules that enabled a mobile robot to autonomously navigate and execute tasks in an unknown environment. This work demonstrates the practical application of core robotics principles learned in the program, including perception, planning, control, and system integration. Below, they explain their process and what they learned.

“Hi, we are Muhammad Faran Akram and Nwafor Solomon Chibuzo. We are excited to share the integration project that concluded our first year in the IFRoS Master's program. Its main goal was to combine the key skills we learned across different courses (perception, planning, and intervention) into a single, functioning autonomous system.

The challenge was to program a TurtleBot 2 mobile robot, equipped with a 4-degree-of-freedom robotic arm, to perform a complete task without human guidance. The robot had to start with no prior map of its environment and execute the following mission:

- Explore the unknown area and create a map of it.

- During exploration, search for and detect a specific object marked with an ArUco tag.

- Once found, approach the object and pick it up with the manipulator.

- Return to the starting position and drop off the object.

To solve this, we developed a system coordinated by Behavior Trees, which let the robot switch between tasks based on the success or failure of each step. Our solution was built on three main components:

- Exploration and Mapping: For the robot to navigate an unknown space, we used a 'Next-Best-View' (NBV) approach. This algorithm analyzes the current map and selects the next viewpoint expected to reveal the most unexplored area. The map was built in real time using data from the robot's RGB-D camera.

- Path Planning with Physical Constraints: A robot can't just follow any line drawn on a map. We used the RRT (Rapidly-exploring Random Tree) algorithm to find a collision-free path to a goal. To make the motion smooth and physically feasible for the TurtleBot, we generated Dubins curves, which respect nonholonomic motion constraints by modeling the robot as a car-like vehicle that cannot move sideways and has a minimum turning radius.

- Object Detection and Manipulation: While exploring, the robot’s perception system was always active, looking for the ArUco marker. Once the marker was detected, the system’s priority shifted from exploration to manipulation. The robot planned a path to the object, and a task-priority control framework guided the arm to approach, grasp, and lift it while keeping every joint within its limits.
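To make the NBV idea from the first component concrete, here is a minimal Python sketch of one common variant, frontier-based NBV. It is an illustration, not the team's implementation. It assumes a 2D occupancy grid with hypothetical cell values (-1 unknown, 0 free, 1 occupied), treats free cells that border unknown space as candidate viewpoints, and scores each one by the number of unknown cells within an assumed sensor radius minus a travel-distance penalty. It ignores occlusion, which a real RGB-D-based gain estimate would usually handle by ray casting.

```python
import math

# Hypothetical occupancy-grid encoding (an assumption for this sketch).
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def frontier_cells(grid):
    """Free cells with at least one 4-connected unknown neighbour."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


def information_gain(grid, cell, radius):
    """Count unknown cells within `radius` of `cell` (no occlusion check)."""
    r0, c0 = cell
    gain = 0
    for r in range(max(0, r0 - radius), min(len(grid), r0 + radius + 1)):
        for c in range(max(0, c0 - radius), min(len(grid[0]), c0 + radius + 1)):
            if grid[r][c] == UNKNOWN and math.hypot(r - r0, c - c0) <= radius:
                gain += 1
    return gain


def next_best_view(grid, robot, radius=3, distance_weight=0.5):
    """Pick the frontier cell with the best gain-minus-travel-cost score."""
    best, best_score = None, -math.inf
    for cell in frontier_cells(grid):
        score = information_gain(grid, cell, radius) - distance_weight * math.dist(robot, cell)
        if score > best_score:
            best, best_score = cell, score
    return best


# Example: the left half of a 5x6 map is explored, the right half is unknown.
grid = [[FREE] * 3 + [UNKNOWN] * 3 for _ in range(5)]
print(next_best_view(grid, robot=(2, 0)))  # (2, 2)
```

The distance weight trades information against travel cost: raising it makes the robot prefer nearby frontiers even when a farther one would reveal more of the map.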
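The RRT step from the path-planning component can be sketched in a similarly minimal way. This Python example grows a tree in an assumed 10 m x 10 m world with hypothetical circular obstacles given as `(x, y, radius)`, and it uses straight-line edges for brevity. In the project, feasibility for the TurtleBot came from Dubins curves, which this sketch does not implement. The parameter names (`step_size`, `goal_bias`, `goal_tolerance`) are illustrative.

```python
import math
import random


def segment_is_free(p, q, obstacles, resolution=0.05):
    """Check a straight segment against circular obstacles (x, y, radius) by sampling."""
    steps = max(1, int(math.dist(p, q) / resolution))
    for i in range(steps + 1):
        t = i / steps
        x = p[0] + t * (q[0] - p[0])
        y = p[1] + t * (q[1] - p[1])
        if any(math.hypot(x - ox, y - oy) <= r for ox, oy, r in obstacles):
            return False
    return True


def rrt(start, goal, obstacles, bounds=(0.0, 10.0), step_size=0.5,
        goal_bias=0.1, goal_tolerance=0.5, max_iters=5000, seed=0):
    """Grow a tree from `start`; return a list of waypoints to `goal`, or None."""
    rng = random.Random(seed)
    nodes = [start]
    parents = [None]  # parents[i] is the index of node i's parent
    for _ in range(max_iters):
        # Occasionally sample the goal itself to bias growth toward it.
        if rng.random() < goal_bias:
            sample = goal
        else:
            sample = (rng.uniform(*bounds), rng.uniform(*bounds))
        nearest_index = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        nearest = nodes[nearest_index]
        distance = math.dist(nearest, sample)
        if distance == 0:
            continue
        # Steer: move at most step_size from the nearest node toward the sample.
        scale = min(1.0, step_size / distance)
        new = (nearest[0] + scale * (sample[0] - nearest[0]),
               nearest[1] + scale * (sample[1] - nearest[1]))
        if not segment_is_free(nearest, new, obstacles):
            continue
        nodes.append(new)
        parents.append(nearest_index)
        if math.dist(new, goal) <= goal_tolerance and segment_is_free(new, goal, obstacles):
            # Walk back through the parents to recover the path.
            path = [goal]
            index = len(nodes) - 1
            while index is not None:
                path.append(nodes[index])
                index = parents[index]
            return path[::-1]
    return None


path = rrt(start=(1.0, 1.0), goal=(9.0, 9.0), obstacles=[(5.0, 5.0, 1.5)])
print(len(path), "waypoints")
```

Swapping the straight-line steer and collision check for a Dubins connection between poses `(x, y, heading)` would turn this into a kinodynamically feasible planner for a car-like model.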
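The task-priority idea from the manipulation component can be illustrated with a hypothetical planar 3-link arm (not the TurtleBot's actual manipulator). In this sketch, the primary task drives the end effector to a target through a damped pseudoinverse of the Jacobian. A lower-priority task pulls the joints toward the middle of their range and is projected into the null space of the primary task, so it cannot disturb it. This is the classic two-level null-space (gradient-projection) form. Treating joint limits as a low-priority centering task plus a hard clamp is a simplifying assumption, and the team's framework may handle limits differently.

```python
import math

# Hypothetical planar 3-link arm: link lengths (m) and joint limits (rad).
LINKS = (0.3, 0.25, 0.15)
Q_MIN = (-2.0, -2.0, -2.0)
Q_MAX = (2.0, 2.0, 2.0)


def absolute_angles(q):
    """Cumulative link angles of the planar chain."""
    angles, total = [], 0.0
    for qi in q:
        total += qi
        angles.append(total)
    return angles


def forward_kinematics(q):
    """End-effector position (x, y)."""
    angles = absolute_angles(q)
    x = sum(l * math.cos(a) for l, a in zip(LINKS, angles))
    y = sum(l * math.sin(a) for l, a in zip(LINKS, angles))
    return x, y


def jacobian(q):
    """2x3 position Jacobian: joint j moves every link from j outward."""
    angles = absolute_angles(q)
    n = len(LINKS)
    return [
        [-sum(LINKS[k] * math.sin(angles[k]) for k in range(j, n)) for j in range(n)],
        [sum(LINKS[k] * math.cos(angles[k]) for k in range(j, n)) for j in range(n)],
    ]


def damped_pinv(J, damping=1e-3):
    """Damped pseudoinverse J^T (J J^T + damping^2 I)^-1 of a 2xN matrix."""
    n = len(J[0])
    a = sum(v * v for v in J[0]) + damping ** 2
    b = sum(u * v for u, v in zip(J[0], J[1]))
    d = sum(v * v for v in J[1]) + damping ** 2
    det = a * d - b * b
    inv = ((d / det, -b / det), (-b / det, a / det))
    return [[J[0][r] * inv[0][c] + J[1][r] * inv[1][c] for c in range(2)] for r in range(n)]


def task_priority_step(q, target, gain=0.5, secondary_gain=0.1):
    """One update: primary end-effector task, joint centering in its null space."""
    n = len(q)
    x, y = forward_kinematics(q)
    error = (target[0] - x, target[1] - y)
    J = jacobian(q)
    J_pinv = damped_pinv(J)
    # Primary task: joint velocities that reduce the end-effector error.
    dq_primary = [J_pinv[r][0] * error[0] + J_pinv[r][1] * error[1] for r in range(n)]
    # Null-space projector N = I - J^+ J of the primary task.
    N = [[(1.0 if r == c else 0.0) - sum(J_pinv[r][i] * J[i][c] for i in range(2))
          for c in range(n)] for r in range(n)]
    # Secondary task: pull each joint toward the middle of its range.
    centering = [(lo + hi) / 2 - qi for qi, lo, hi in zip(q, Q_MIN, Q_MAX)]
    dq_secondary = [sum(N[r][c] * centering[c] for c in range(n)) for r in range(n)]
    # Integrate and clamp to the joint limits as a final safeguard.
    return [min(max(qi + gain * p + secondary_gain * s, lo), hi)
            for qi, p, s, lo, hi in zip(q, dq_primary, dq_secondary, Q_MIN, Q_MAX)]


def reach(target, q0, iterations=300):
    """Iterate the controller from q0 toward the target."""
    q = list(q0)
    for _ in range(iterations):
        q = task_priority_step(q, target)
    return q


q = reach(target=(0.35, 0.3), q0=(0.3, 0.5, 0.4))
print(forward_kinematics(q))
```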

This project was a valuable hands-on experience. It moved our understanding from theoretical concepts to practical implementation, which is the core of the IFRoS program. Integrating different software components and seeing them work together on real hardware taught us how to tackle the complex, real-world challenges found in robotics.

We are very grateful for the support and guidance of our professors at the Universitat de Girona (UdG), who helped make this project a success.”

A full demonstration of the robot completing its mission is available on YouTube: https://www.youtube.com/watch?v=pwI8Y_kNI7w&t=8s